A/B testing and multivariate testing are two methods used to improve website performance by analyzing user behavior. Here's what you need to know:

- A/B Testing: Compares two versions of a webpage or element (e.g., Variant A vs. Variant B) to see which performs better based on a specific metric, like conversion rates. It's straightforward, works well for smaller changes, and is ideal for websites with lower traffic.

- Multivariate Testing (MVT): Tests multiple elements (e.g., headlines, images, buttons) simultaneously to determine the best combination. It's more complex, requires high traffic, and is suited for analyzing how elements interact.

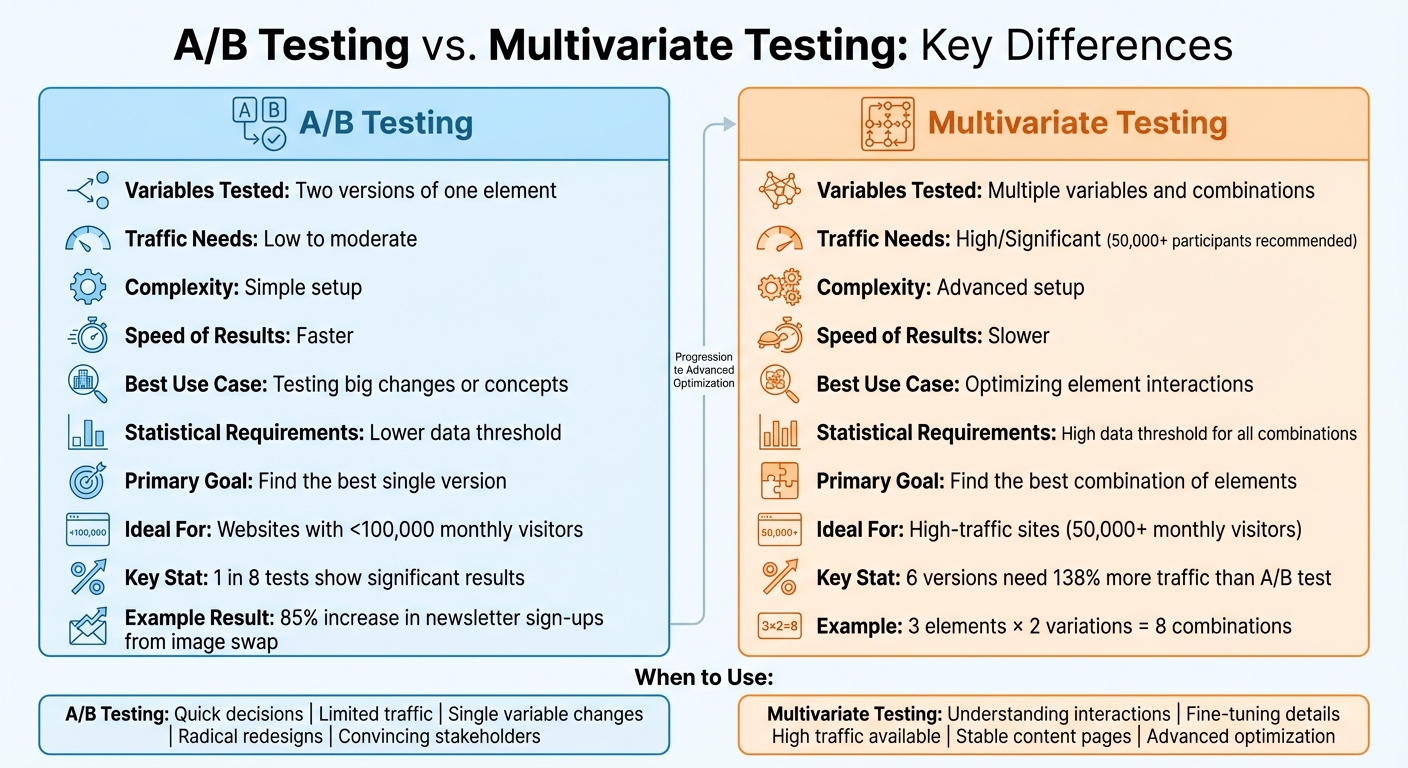

Key Differences:

- A/B testing is simpler, faster, and focuses on one variable at a time.

- MVT dives deeper, testing multiple variables but needs more traffic and time.

Quick Comparison:

| Feature | A/B Testing | Multivariate Testing |
|---|---|---|
| Variables Tested | Two versions of one element | Multiple variables and combinations |
| Traffic Needs | Low to moderate | High |
| Complexity | Simple setup | Advanced setup |
| Speed of Results | Faster | Slower |
| Best Use Case | Testing big changes or concepts | Optimizing element interactions |

If you're starting out or have limited traffic, A/B testing is better. For deeper insights into how elements work together, use MVT - but only if you have enough traffic.

A/B Testing vs Multivariate Testing Comparison Chart


What is A/B Testing?

A/B testing, also known as split testing, involves comparing two versions of a webpage by using live traffic to determine which one performs better. Visitors are randomly shown either version, and their behavior is tracked to figure out which option is more effective.

The beauty of A/B testing lies in its straightforward approach. While it usually tests two versions, it can also handle three or four variations, whether you're making big design changes or small tweaks - like adjusting the placement of a call-to-action button. For instance, one test revealed an 85% increase in newsletter sign-ups just by swapping out an illustration. These kinds of results highlight how A/B testing can provide measurable insights into design changes, often winning over even the most doubtful stakeholders.

Let’s dive into the four-step process that makes A/B testing work.

How A/B Testing Works

The process is pretty simple: start with a hypothesis, create a control version and a variation, split the live traffic between them, and track conversion rates to determine the winner.
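To make the split-and-track steps concrete, here's a minimal Python sketch. It assumes each visitor has a stable ID (for example, from a cookie) and hashes that ID so the same visitor always lands in the same version. The function names, the experiment name `homepage-cta`, and the visitor IDs are illustrative assumptions, not the API of any particular testing tool.

```python
import hashlib

def assign_variant(visitor_id: str, experiment: str,
                   variants=("control", "variation")) -> str:
    """Deterministically map a visitor to one variant with a roughly even split."""
    # Hashing experiment + visitor ID keeps assignments stable across visits
    # and independent between different experiments.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]

def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who converted; 0.0 when there is no traffic yet."""
    return conversions / visitors if visitors else 0.0

# Simulate 10,000 hypothetical visitors to check that the split is roughly even.
counts = {"control": 0, "variation": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "homepage-cta")] += 1

print(counts)
```

Hash-based assignment is only one way to split traffic. Most testing platforms handle this step for you, but the idea is the same: a random yet consistent split, followed by a conversion-rate comparison per version.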

Benefits of A/B Testing

A/B testing comes with several perks. Its simplicity makes it beginner-friendly, and the results are easy to interpret. For websites with lower traffic, this method is particularly handy because splitting visitors into just a few groups allows tests to reach statistical significance faster. Plus, it’s a great option when you need actionable data quickly to make decisions.

For startups or brands just starting their optimization journey, A/B testing provides a reliable starting point. As Optimizely explains:

"A/B testing is also a good way to introduce the concept of optimization by testing to a skeptical team, as it can quickly demonstrate the quantifiable impact of a simple design change."

That said, like any method, A/B testing isn’t without its challenges.

Drawbacks of A/B Testing

While A/B testing has its advantages, it also has some limitations. One major drawback is its focus on a single variable at a time - or complete page designs - without shedding light on how different elements work together. For example, if one homepage design outperforms another, you may not know whether it was the headline, an image, or the call-to-action button that made the difference.

Another issue is that not every test produces meaningful results. According to AppSumo, only 1 in 8 A/B tests results in a statistically significant change. Additionally, while A/B testing works well for small-scale changes, testing more than two to four variations at once splits your traffic into smaller groups and can drag out the time required to gather statistically reliable data.
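If you're wondering what "statistically significant" looks like in practice, one common check is a two-proportion z-test. The sketch below is a simplified illustration using only Python's standard library. The visitor and conversion counts are made-up numbers, and the 0.05 threshold is a widely used convention rather than a rule from any specific tool.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation in the data, so no evidence of a difference
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical results: control converts 200 of 5,000, variation 250 of 5,000.
z, p_value = two_proportion_z_test(200, 5_000, 250, 5_000)
print(f"z = {z:.2f}, p = {p_value:.4f}, significant at 0.05: {p_value < 0.05}")
```

A p-value under your chosen threshold suggests the difference is unlikely to be noise. Decide on the sample size before the test starts, since checking repeatedly and stopping at the first "significant" reading inflates false positives.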

What is Multivariate Testing?

Multivariate testing (MVT) is a method used to test multiple variables on a single page at the same time to see how different elements interact and influence user engagement. Instead of comparing two complete page designs like in A/B testing, MVT focuses on how specific components - such as headlines, images, or call-to-action (CTA) buttons - work together. For instance, you might find that a headline performs well with one background image but not as effectively with another.

As Corey Wainwright from HubSpot puts it:

"The multivariate test is more complicated and best suited for more advanced marketing testers, as it tests multiple variables and how they interact with one another, giving far more possible combinations for the site visitor to experience."

The standout feature of MVT is that it doesn’t just identify the best-performing version. It also uncovers how individual elements combine to create an optimal outcome.

How Multivariate Testing Works

MVT relies on a test matrix to split traffic across all possible combinations of the variations being tested. The number of combinations depends on the number of elements and their variations. For example:

- Testing three elements with two variations each results in 8 combinations (2 x 2 x 2).

- Testing three elements with three variations each results in 27 combinations (3 x 3 x 3).

Let’s say you’re testing three elements - appeal copy, images, and CTA timing - with two variations each. Your traffic would be divided among eight unique experiences:

| Element | Control | Variant 1 | Variant 2 | Variant 3 | Variant 4 | Variant 5 | Variant 6 | Variant 7 |
|---|---|---|---|---|---|---|---|---|
| Appeal Copy | A | A | A | A | B | B | B | B |
| Image | A | A | B | B | A | A | B | B |
| CTA Timing | A | B | A | B | A | B | A | B |
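The eight experiences in the table can also be generated programmatically. Here's a short Python sketch that builds a full test matrix with `itertools.product`. The dictionary keys and the `build_test_matrix` helper are illustrative names chosen for this example.

```python
from itertools import product

# Each element under test and its variations (two each, as in the table).
elements = {
    "appeal_copy": ["A", "B"],
    "image": ["A", "B"],
    "cta_timing": ["A", "B"],
}

def build_test_matrix(elements: dict) -> list:
    """Return every combination of variations as a list of dicts."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]

matrix = build_test_matrix(elements)
for index, experience in enumerate(matrix):
    label = "Control" if index == 0 else f"Variant {index}"
    print(label, experience)

print(f"Total combinations: {len(matrix)}")
```

Adding a third variation to each element grows the matrix from 8 to 27 rows, which is why traffic requirements climb so quickly.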

This setup not only identifies the best-performing elements but also highlights how they work together. However, analyzing these results requires advanced tools and expertise. As Christopher Santini, Senior Consultant at Oracle Digital Experience Agency, explains:

"MVT's greatest strength is it allows you to much more quickly and easily see how changes in various elements enhance or detract from one another."

This ability to analyze the interaction of elements makes MVT a powerful tool for optimizing user experiences.

Benefits of Multivariate Testing

While MVT can be complex, it offers several advantages. The biggest benefit is efficiency - you can test multiple changes at once instead of running separate A/B tests for each element. This approach also reveals interaction effects, where the combined impact of two changes differs from the sum of their individual effects. For large-scale projects, such as homepage redesigns, MVT provides data-driven insights into which combinations of elements work best, eliminating guesswork. Instead of testing elements one by one, MVT delivers comprehensive results from a single test.
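To see what an interaction effect means numerically, consider a simplified two-element test (headline and image, two variations each). The sketch below compares the lift of the combined change against the sum of the individual lifts. The conversion rates are invented purely for illustration, and a real analysis would also check whether the difference is statistically significant.

```python
def interaction_effect(rates: dict) -> float:
    """Combined lift minus the sum of individual lifts for a 2x2 test.

    `rates` maps (headline, image) to a conversion rate, with "A" as the
    control variation and "B" as the new variation of each element.
    """
    base = rates[("A", "A")]
    headline_lift = rates[("B", "A")] - base   # new headline, old image
    image_lift = rates[("A", "B")] - base      # old headline, new image
    combined_lift = rates[("B", "B")] - base   # both changes together
    return combined_lift - (headline_lift + image_lift)

# Hypothetical conversion rates for the four combinations.
rates = {
    ("A", "A"): 0.040,
    ("B", "A"): 0.044,
    ("A", "B"): 0.043,
    ("B", "B"): 0.055,
}

effect = interaction_effect(rates)
print(f"Interaction effect: {effect:+.3f}")
```

A value near zero suggests the two changes act independently. A clearly positive or negative value suggests they reinforce or undercut each other, which separate A/B tests on each element would not reveal.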

Drawbacks of Multivariate Testing

Despite its strengths, MVT comes with challenges. It requires a high volume of traffic to ensure statistical significance, as the audience is divided among many variations. For example, testing three headlines, two images, and two buttons results in 12 unique variations, each needing enough traffic to produce reliable results.

The complexity doesn’t stop there. As Christopher Santini points out:

"Winning experiences derived from MVT testing rely on the interplay between the different elements of that experience, and that interplay is disrupted if content is routinely changing."

Frequent content updates can disrupt the stable conditions MVT relies on. Additionally, these tests take longer to complete compared to A/B tests. While an A/B test might take a few days, an MVT could require weeks or even months, depending on the traffic and number of variations. These factors make MVT better suited for projects where stability and high traffic can be maintained.

Main Differences Between A/B and Multivariate Testing

Let's break down how A/B testing and multivariate testing differ. While both are essential tools for optimizing performance, they serve distinct purposes. A/B testing is all about comparing two versions of a single element or page to see which performs better. On the other hand, multivariate testing dives deeper, evaluating multiple variables at once to pinpoint the most effective combination of elements.

As Corey Wainwright from HubSpot puts it:

"The critical difference is that A/B testing focuses on two variables, while multivariate is 2+ variables."

For instance, if you're deciding between two homepage designs, A/B testing helps you pick the winner. But if you're curious about how your headline, hero image, and call-to-action button interact, multivariate testing is the way to go.

Another key distinction is complexity. A/B testing is simple to set up and track, making it beginner-friendly. In contrast, multivariate testing demands more expertise and a larger audience to ensure every combination gets enough traffic for reliable results. The table below provides a quick overview of how these methods compare:

Comparison Table

| Feature | A/B Testing | Multivariate Testing |
|---|---|---|
| Variables Tested | Two versions (A and B) of one element | Multiple variables and their combinations |
| Traffic Needs | Low to moderate | High/Significant |
| Complexity Level | Simple; easy to set up and track | Advanced; requires detailed setup |
| Statistical Requirements | Lower data threshold for significance | High data threshold for all combinations |
| Speed of Results | Quick; results come faster | Slower; more data collection needed |
| Best-Fit Scenarios | Testing major design changes or concepts | Optimizing how elements work together |
| Primary Goal | Find the best single version | Find the best combination of elements |

When to Use A/B Testing

A/B testing shines for websites with fewer than 100,000 monthly unique visitors. Since you're only dividing traffic between two versions, you can achieve statistically significant results much faster compared to multivariate testing, which spreads visitors across multiple combinations.

One of the biggest advantages of A/B testing is speed. While multivariate tests can take weeks - or even months - to conclude, A/B tests often deliver results in just a few days. This makes them perfect for evaluating single design tweaks or testing specific offers without needing advanced tools or expertise.

A/B testing is ideal for testing individual changes. Whether you're swapping a headline, experimenting with a new call-to-action button, or introducing a different hero image, this method allows you to zero in on what’s driving performance shifts. While multivariate testing examines how different elements interact, A/B testing focuses on one variable at a time, making it easier to draw clear conclusions. It’s also great for radical redesigns - like comparing a text-heavy layout to a minimalist design with a top-bar ad. The simplicity of testing two distinct options eliminates the complexity of managing multiple variables.

Another common use case is comparing specific offers. For instance, you can test whether "15% off" outperforms "free shipping" at checkout. With its straightforward setup, A/B testing provides clear insights without requiring advanced statistical knowledge or specialized tools.

Lastly, A/B testing is a powerful way to convince skeptical stakeholders. When you need to show the measurable impact of a design or content change, a simple A/B test with clear, actionable results is much more compelling than presenting data from a complicated multivariate test.

Up next, we’ll dive into when multivariate testing is the better option for deeper analysis.

When to Use Multivariate Testing

Multivariate testing works best when you have plenty of data to work with. It's ideal for high-traffic sites where multiple elements need optimization at the same time. Unlike A/B testing, which compares just two versions, multivariate testing requires significantly more visitors. For example, a test with six versions demands 138% more traffic than a typical A/B test to achieve statistical confidence. Larger tests, involving 12 or more combinations, usually need upwards of 80,000 visitors.

Traffic volume is non-negotiable. Bain & Company suggests that multivariate testing becomes practical only when you have at least 50,000 participants. Peep Laja, Founder of CXL, offers a stark example:

"If your site gets 3,000 visits per month with a 7% conversion rate, you'd need 3 years for meaningful multivariate results."

For reliable results, aim for at least 10,000 monthly visitors to the tested page, though 50,000 or more is preferable. These numbers highlight how critical statistical significance is for dependable insights.

Multivariate testing is especially useful for understanding how elements interact. It’s perfect for testing subtle changes, like tweaking the wording or font of a call-to-action, rather than experimenting with major layout overhauls. Christopher Santini, Senior Consultant at Oracle Digital Experience Agency, emphasizes this point:

"MVT's greatest strength is it allows you to much more quickly and easily see how changes in various elements enhance or detract from one another. If A/B testing was used to discover these cross-element interactions, it would take much longer and involve many more tests."

This makes multivariate testing invaluable for understanding nuanced interactions, like whether a specific headline works better with a certain image - something A/B testing can't fully address.

Reserve multivariate testing for advanced optimization efforts. It’s best suited for fine-tuning pages that already perform well. Each combination being tested needs 100–150 conversions to ensure reliable results, making this method most effective for high-traffic pages where you're optimizing specific elements rather than overhauling the entire design.
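The 100–150 conversions per combination guideline translates into a rough traffic estimate. Here's a back-of-the-envelope Python sketch, assuming traffic is split evenly and every combination converts at about the same baseline rate. The 2% conversion rate is a hypothetical figure, and the 12 combinations match the earlier example of three headlines, two images, and two buttons.

```python
import math

def visitors_needed(combinations: int, conversions_per_combination: int,
                    conversion_rate: float) -> int:
    """Estimate total visitors needed so each combination hits its conversion target."""
    if combinations < 1 or conversions_per_combination < 1:
        raise ValueError("combinations and conversions must be at least 1")
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be between 0 (exclusive) and 1")
    # Visitors each combination needs to collect the target number of conversions.
    per_combination = math.ceil(conversions_per_combination / conversion_rate)
    return combinations * per_combination

# 12 combinations, hypothetical 2% baseline conversion rate.
low = visitors_needed(12, 100, 0.02)
high = visitors_needed(12, 150, 0.02)
print(f"Estimated visitors needed: {low:,} to {high:,}")
```

Treat the output as an order-of-magnitude check, not a formal power calculation. If the estimate far exceeds what the page receives in a few months, fewer elements or a plain A/B test is probably the safer choice.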

Avoid using multivariate testing on pages with frequently changing content. The results depend on stable interactions between elements. If the page content changes often, the interactions - and the insights you gain - become unreliable. Stick to pages with consistent content where you can implement and maintain the winning combination over time.

Using A/B and Multivariate Testing Together

To get the most out of your testing efforts, try combining A/B and multivariate testing in a sequential manner. Start with A/B testing to pinpoint the big-picture winners, then follow up with multivariate testing to fine-tune the details. This method helps you gather meaningful insights while managing limited website traffic effectively.

Corey Wainwright from HubSpot explains the complementary nature of these approaches:

"While an A/B test allows marketers to learn which major formatting of a site or piece of content is most engaging, multivariate allows them to zone in on which specific page elements are most engaging."

A/B tests are perfect for tackling broader questions, like whether a short form generates more leads than a long one. Once you have a clear winner, you can use multivariate testing to understand how individual elements - like headlines, images, or button colors - work together to boost performance. This step-by-step strategy connects big design decisions to detailed optimizations.

Sequential A/B tests also tend to deliver insights faster than diving straight into a complex multivariate test. Atticus Li, Applied Experimentation Lead, highlights the value of speed:

"Velocity of A/B tests almost always generates more learning than depth of MVTs."

For example, running six sequential A/B tests of two weeks each takes about three months and can often provide insights equivalent to a four-month multivariate test, with usable results arriving after every test instead of only at the end. This makes it a smart option for sites with fewer than 100,000 monthly visitors, which may not have the traffic volume needed for reliable multivariate results.

However, multivariate testing shines when you suspect interaction effects - cases where two elements work best in combination. Say you think a benefit-focused headline only performs well with a specific product image. That’s when multivariate testing becomes the go-to tool. Before starting, calculate whether your traffic can support the test using this formula:

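Visitors per combination per week = (Page visitors per week × Traffic allocation) / Number of combinations

The result tells you how many visitors each combination will receive in a week. Compare it with the sample each combination needs to judge whether the test can finish in a reasonable time.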
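Here's the same formula as a small Python sketch, extended with an estimate of how many weeks the test would need to run. The traffic figures and the per-combination target of 5,000 visitors are hypothetical placeholders, so swap in your own numbers.

```python
import math

def weekly_visitors_per_combination(page_visitors_per_week: float,
                                    traffic_allocation: float,
                                    combinations: int) -> float:
    """(Page visitors/week x traffic allocation) / number of combinations."""
    if combinations < 1:
        raise ValueError("combinations must be at least 1")
    if not 0 < traffic_allocation <= 1:
        raise ValueError("traffic_allocation must be between 0 (exclusive) and 1")
    return page_visitors_per_week * traffic_allocation / combinations

def weeks_to_reach(target_visitors_per_combination: int,
                   weekly_per_combination: float) -> int:
    """Whole weeks needed for each combination to reach its visitor target."""
    return math.ceil(target_visitors_per_combination / weekly_per_combination)

# Hypothetical page: 20,000 visitors/week, half of them in the test, 8 combinations.
per_week = weekly_visitors_per_combination(20_000, 0.5, 8)
weeks = weeks_to_reach(5_000, per_week)
print(f"{per_week:,.0f} visitors per combination per week, about {weeks} weeks to finish")
```

If the estimated duration stretches past a couple of months, consider testing fewer elements or raising the traffic allocation before you launch.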

Conclusion

Each testing method serves a specific purpose, and the choice depends on factors like traffic volume, the urgency of results, and the level of detail you’re after. A/B testing is perfect for comparing two distinct versions, especially when making significant design changes. It’s straightforward to set up, delivers quick results, and works well for websites with lower traffic.

On the other hand, multivariate testing helps uncover how multiple elements interact, but it requires more traffic and a higher level of complexity. As Corey Wainwright from HubSpot explains:

"Only use a multivariate test if you have a significant amount of website traffic. That way, you can truly determine which components of your website yield the best results."

If you’re just starting out or working with limited traffic, A/B testing is your go-to for quick insights and initial improvements. Once you’ve established a solid direction using A/B testing, multivariate testing can help fine-tune the details, like how your headline, images, and button colors work together.

Keep in mind, no testing method will yield valuable insights without enough traffic. Whether it’s A/B or multivariate testing, the tested page needs a steady stream of visitors to produce meaningful results.

FAQs

How do I know if I have enough traffic for MVT?

Multivariate testing (MVT) needs far more traffic than A/B testing because visitors are split across every combination of the elements being tested. As a rough guide, aim for at least 10,000 monthly visitors to the tested page (50,000 or more is preferable), and expect each combination to need 100–150 conversions. Dividing your weekly test traffic by the number of combinations gives a quick estimate of whether each combination can reach that threshold in a reasonable time.

What should I test first in an A/B test?

Start by focusing on one element that you think will have the biggest impact on your conversion rate. For example, try testing the placement of your call-to-action button or the wording of a headline. This method allows you to quickly see which version resonates better with your audience and gives you clear direction for making improvements.

Can I run A/B tests and MVT on the same page?

Yes, it's possible to run both A/B tests and multivariate testing (MVT) on the same page, though they are usually conducted one after the other. Here's how they differ and work together:

- A/B Testing: This method compares two versions of a single element to see which one performs better. It's great for isolating and optimizing specific elements like headlines or call-to-action buttons.

- Multivariate Testing (MVT): MVT goes a step further by testing multiple elements and their combinations to determine which mix works best. However, it requires a larger volume of traffic to produce reliable results.

A common approach is to start with A/B testing to fine-tune individual components. Once you've identified the best-performing versions of those elements, you can move on to MVT to analyze how they interact and refine the overall design. Just keep in mind that MVT is more data-intensive, so ensure your page has enough traffic to support it.